Nov 13, 2007

Furry Modulator

…………………………

-Playing on air

A Spatial Instrument

-a variation of the famous ‘Theremin’ by the Russian inventor Léon Theremin (1919)

Inputs

-distance

-sectors in the visual field

-ripple gestures

Processing

-movement through the sectors and steps builds up a sequence

-making a ripple gesture triggers a chain reaction of samples in the stack

-also a single sample can be triggered

-distance affects the volume and pitch of the ambient background tone

-a combination of distance and spatial grid selects a sample from the pool

-temporal effects on volume and filtering for the samples in a chain reaction

Outputs

– MIDI events into Reason (possibly)

– samples loaded inside PD

– stereo sound through loudspeakers

Interface

Bodily movement in space for a single person using 2 hands and ripple gestures controlling speed, pitch and the triggering of (stacked) sample sequences.

Possible extras

Physical presentation of the instrument with a tangible object/character.

LED lights in the tangible object provide feedback

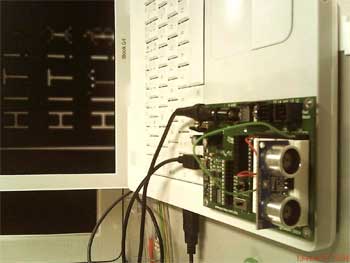

Tech

Basic STAMP

1x Ultrasound sensor

1x webcam

Mac OS X

active monitors

Platform

Pure Data

ARToolkit for motion detection in the visual field

Inspirational image for the tangible interface:

Santamoth

Day 2

Sensor day. Progress! The PING))) ultrasonic sensor we will use with our Furry Modulator now captures the distance nicely with a threshold, and we could measure the scope of its detection field. About 15 degrees for the cone radius of the detection field will be enough for our project. It seems we should hold some round object in the playing hand(s), a ball maybe, to improve the reception, just like the schematic for the sensor says.

Some issues came up while learning about ultrasonic detection: background interference could cripple our instrument. The playing field needs a fairly even background, and the sensor must not pick up the player's body while the hands are performing. Depending on the setup and the space, these problems could disappear.

-the sampling frequency must be high, because it's a realtime instrument: a new reading at least every 20 ms

-therefore, the signal should be carefully interpolated: smoothed out between input cycles.
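A minimal sketch of what smoothing between input cycles could look like, written in Python rather than as a PD patch. It is not the code we ran: the function name and the `alpha` value are assumptions for illustration. Each new reading pulls the smoothed value a fraction of the way toward it, so a jumpy sensor turns into a gliding signal.

```python
def smooth(readings, alpha=0.2):
    """Exponentially smooth a sequence of distance readings.

    alpha is a hypothetical smoothing factor between 0 and 1:
    small values glide slowly, 1.0 follows the raw readings exactly.
    """
    smoothed = []
    value = None
    for reading in readings:
        if value is None:
            # The first reading has nothing to blend with.
            value = float(reading)
        else:
            # Move a fraction of the remaining gap toward the new reading.
            value += alpha * (reading - value)
        smoothed.append(value)
    return smoothed
```

With a reading arriving every 20 ms or faster, a smaller `alpha` trades responsiveness for a steadier tone, so the value would need tuning by ear.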

13.2007 15:00

Day 3 : communicating between BS2 and apps

A big hairy problem for me (the tech guy) was getting the serial communication protocol to work between Basic Stamp 2 and Pure Data, since I have no heavy engineering or programming experience. Finally it turned out that the only problem was the build / version of pd-extended on my Mac OS X. Switching to a Wintel machine fixed the issue, because there I could find the comport object for PD, which handles generic serial port input.

Getting lost, I learned a lot about serial comm. and networking in PD, so it was a great learning experience.

There is a great IRC channel at irc.freenode.net called #dataflow. Some friendly spirit there called Wim helped me out and cured my noobie blindness like a snap.

The missing comport object caused a huge gap in our schedule. We have no patch in PD yet, and the camera input is still missing. Also my Logitech so-called-pro webcam wasn’t running on the Mac. Pssht.

— Problems are like food for the hungry developer —

Day 4 : Pure Data patch

Desperate times need desperate measures, so I switch both the operating system and the microcontroller while trying to interpret the serial port data. Of course, later I realized it was completely unnecessary and all we need is a solution inside the software, but then it was too late already: an entire day wasted.

16.00 .. with a helper patch from Koray Tahiglou, the pd software can now receive serial data from the ultrasound sensor in the correct format so it is possible to move on to the implementation in pd.

Evening time: Based on our design, Pure Data now handles the incoming signal and does various things with it.

– filtering out the near and the far from the distance reading

– getting a target value and interpolating the drone value w. gravity

– dividing the distance values into 4 equal zones and storing the number

– a timer and a delay of 250ms together capture the moment when the sound artist’s hand leaves the signal beam: a sample index 0, 1, 2 or 3 can be sent separately to the output.

– a netsend object to move the drone value output to the other laptop, which handles more signal processing and the sound synths in Reason.
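The zone and release steps in the list above can be sketched in Python like this. It is only an approximation of the PD patch: the near and far limits, the names, and the exact release rule (fire once when no hand has been seen for 250 ms) are assumptions, not values taken from the patch.

```python
NEAR_CM = 10.0    # assumed near limit; closer readings are filtered out
FAR_CM = 90.0     # assumed far limit; farther readings are filtered out
ZONES = 4         # four equal zones, as in the patch
RELEASE_MS = 250  # the hand must be gone this long before a sample fires


def zone_for(distance_cm):
    """Return the zone index 0..3, or None outside the playable range."""
    if distance_cm < NEAR_CM or distance_cm >= FAR_CM:
        return None
    width = (FAR_CM - NEAR_CM) / ZONES
    return min(int((distance_cm - NEAR_CM) / width), ZONES - 1)


class ReleaseDetector:
    """Remember the last zone and report it once the hand leaves the beam."""

    def __init__(self, release_ms=RELEASE_MS):
        self.release_ms = release_ms
        self.last_zone = None
        self.last_seen_ms = None

    def update(self, now_ms, distance_cm):
        """Feed one reading; return a sample index on release, else None."""
        zone = zone_for(distance_cm)
        if zone is not None:
            # Hand is in the beam: store the zone and restart the timer.
            self.last_zone = zone
            self.last_seen_ms = now_ms
            return None
        if (self.last_zone is not None
                and now_ms - self.last_seen_ms >= self.release_ms):
            # Hand has been away long enough: fire once, then reset.
            fired = self.last_zone
            self.last_zone = None
            return fired
        return None
```

Feeding it a reading of 50 cm at 0 ms and out-of-range readings afterwards returns sample index 2 once the 250 ms have passed, and nothing after that until a hand enters the beam again.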

The patch is ready around midnight, and only connectivity is still missing.
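For the connectivity part: netsend and netreceive talk in Pd's plain-text FUDI format, where atoms are separated by spaces and each message ends with a semicolon. A small Python sketch of a sender that a netreceive object could listen to, useful for testing the link without the full patch. The `drone` selector, the host address and the port are made-up placeholders, not our actual setup.

```python
import socket


def fudi_message(*atoms):
    """Format atoms as one FUDI message: space-separated, ';' terminated."""
    parts = []
    for atom in atoms:
        if isinstance(atom, float):
            # Compact float formatting keeps the message short.
            parts.append(f"{atom:g}")
        else:
            parts.append(str(atom))
    return (" ".join(parts) + ";\n").encode("ascii")


def send_drone(value, host="192.168.0.2", port=3000):
    """Send one drone value over TCP (host and port are hypothetical)."""
    with socket.create_connection((host, port), timeout=1.0) as conn:
        conn.sendall(fudi_message("drone", value))
```

On the receiving laptop a netreceive object listening on the same port would get the message `drone` followed by the number, which can then be routed onward.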

Day 5 : presentation

Registering the computers for the physical network and testing the netsend / netreceive object is finally a success. We hook up the instrument, play out some sounds on video, and prepare for the presentation. I leave at 11.30 to teach a course in Lahti, so Atle handles the presentation alone. Sorry about that ..

We were both happy to get a working instrument that can be played intuitively. The choice of samples is partly controlled by the distance of the gesture trigger and partly a random selection from a bank in Reason. More hands-on testing would have let us tweak the samples and drones to be more responsive for a user who plays the instrument without knowing how the system works.

happy go go,

.Miko

…

Atle Larsen & Mikko Mutanen